I’m currently working on a project which may involve rendering video in a THREE.js environment.

The banner for this site has needed an upgrade for some time, as the CSS animation didn’t really do it justice. So over the weekend I brushed up on video textures and post-processing fragment shaders by creating a real-time ASCII shader.

Initially I tried to do this with pure JavaScript and canvas, based on this article, although I made some changes.

Firstly, the code in that article reads the pixel data and iterates over it to establish average colours, which is very slow.
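
As a rough sketch of what that per-pixel approach looks like (the function name and cell layout are illustrative, not taken from the article), averaging one cell of RGBA data in the layout returned by `getImageData` means touching every pixel in JavaScript:

```javascript
// Average the colour of one rectangular cell of RGBA pixel data.
// `data` is a flat array with 4 bytes per pixel (R, G, B, A), as found in
// ImageData.data. All names here are hypothetical.
function averageCell(data, imageWidth, x0, y0, cellWidth, cellHeight) {
  let r = 0, g = 0, b = 0;
  for (let y = y0; y < y0 + cellHeight; y++) {
    for (let x = x0; x < x0 + cellWidth; x++) {
      const i = (y * imageWidth + x) * 4; // offset of this pixel's red byte
      r += data[i];
      g += data[i + 1];
      b += data[i + 2];
    }
  }
  const count = cellWidth * cellHeight;
  return [r / count, g / count, b / count];
}

// A 2x2 image: two red pixels on top, two blue pixels below.
const samplePixels = new Uint8ClampedArray([
  255, 0, 0, 255,   255, 0, 0, 255,
  0, 0, 255, 255,   0, 0, 255, 255,
]);
const sampleAverage = averageCell(samplePixels, 2, 0, 0, 2, 2);
```

Doing this for every cell of every frame means the inner loop runs once per source pixel, which is where the time goes.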

My first attempt was similar, but used a second canvas to downscale the original image, letting the hardware do the resampling as it scales the image down.
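
A minimal sketch of that idea, assuming one output pixel per character cell (the function name, parameters, and cell size are hypothetical):

```javascript
// Draw the source into a much smaller canvas so the browser does the
// resampling, then read back one pixel per character cell.
// `source` is anything drawImage accepts (image, video, canvas).
function downscaleToGrid(source, sourceWidth, sourceHeight, smallCanvas, cellSize) {
  smallCanvas.width = Math.ceil(sourceWidth / cellSize);
  smallCanvas.height = Math.ceil(sourceHeight / cellSize);
  const ctx = smallCanvas.getContext('2d');
  // The five-argument form of drawImage scales the source to the given size.
  ctx.drawImage(source, 0, 0, smallCanvas.width, smallCanvas.height);
  // Each pixel of the result now approximates the colour of one cell.
  return ctx.getImageData(0, 0, smallCanvas.width, smallCanvas.height);
}
```

In a browser this might be called as `downscaleToGrid(video, video.videoWidth, video.videoHeight, document.createElement('canvas'), 8)`. The exact filtering used when scaling is up to the browser, so the result is an approximation of a true average rather than a guaranteed one.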

This provided a decent boost. However, when I plugged the result into fillText, I found that text rendering was too slow to make this feasible in a full-screen context.

In my second attempt, I created another canvas and pre-rendered each character to it. I then used drawImage to copy characters from this character buffer to the destination canvas.
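
The copy step could look something like this, assuming the characters sit side by side in a single row of the buffer (that layout and the function name are assumptions for illustration):

```javascript
// Copy one pre-rendered glyph from the character buffer to a grid cell on
// the destination canvas, using the nine-argument form of drawImage:
// drawImage(image, sx, sy, sWidth, sHeight, dx, dy, dWidth, dHeight).
function blitGlyph(ctx, atlas, index, column, row, glyphWidth, glyphHeight) {
  ctx.drawImage(
    atlas,
    index * glyphWidth, 0, glyphWidth, glyphHeight,                 // source rectangle
    column * glyphWidth, row * glyphHeight, glyphWidth, glyphHeight // destination rectangle
  );
}
```

This is one drawImage call per character cell per frame, so a full-screen grid still adds up to thousands of calls.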

This was woefully slow compared to what I would expect from a sensible blitting operation, which left me with only one option.

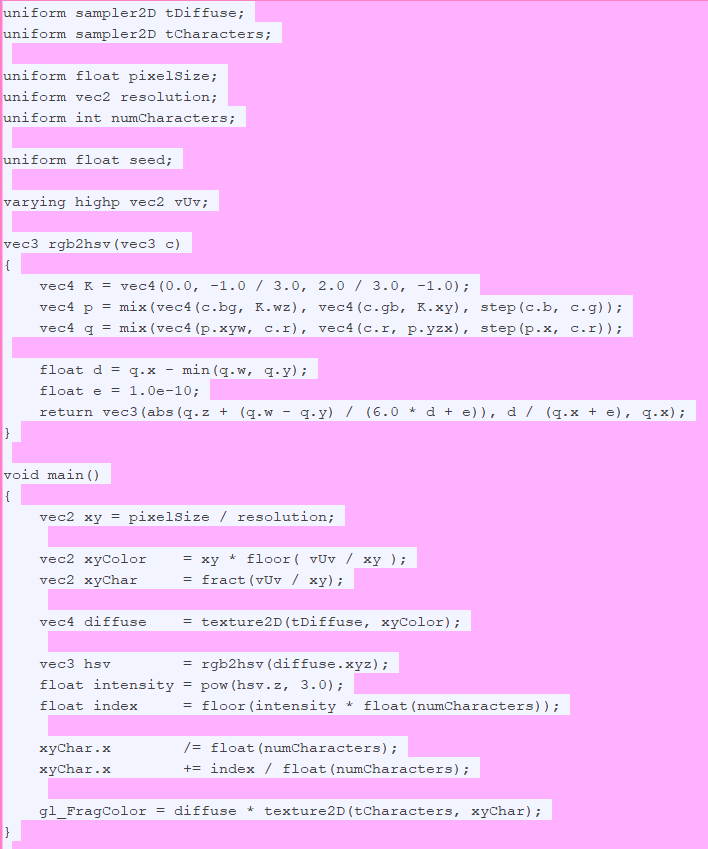

My third and final attempt uses THREE.js and a post-processing fragment shader in combination with the character buffer canvas.

The shader receives the video footage, and the character texture.

It performs a crude average based on THREE.js’s Pixel post-processing example, then converts the RGB average into HSV. From there, the V channel is used to determine pixel intensity.
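
For reference, here is the standard RGB to HSV conversion written in JavaScript rather than GLSL. It is a sketch of the maths only, not the shader itself, and the function name is illustrative. Inputs and outputs are all in the range 0 to 1, as they would be in a shader:

```javascript
// Convert an RGB colour (components in [0, 1]) to HSV (also in [0, 1]).
function rgbToHsv(r, g, b) {
  const max = Math.max(r, g, b);
  const min = Math.min(r, g, b);
  const delta = max - min;

  let h = 0; // hue is undefined for greys, so default to 0
  if (delta > 0) {
    if (max === r) h = ((g - b) / delta) % 6;
    else if (max === g) h = (b - r) / delta + 2;
    else h = (r - g) / delta + 4;
    h /= 6;
    if (h < 0) h += 1; // wrap negative hues back into [0, 1)
  }

  const s = max === 0 ? 0 : delta / max;
  const v = max; // V is simply the largest of the three channels
  return [h, s, v];
}
```

Since V is just `max(r, g, b)`, a shader that only needs the intensity can skip the hue and saturation work entirely.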

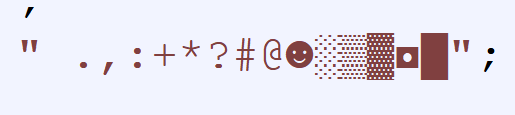

I dug out a modern version of the old DOS console font from the folks at int10h.org and hand-picked characters to represent 16 different levels of intensity.

The shader works out the character index from the intensity, then sets the source rectangle on the character buffer accordingly. The sampled character is then multiplied by the pixel value from the video footage. The result is a character which matches the original video in colour, with a variety of characters being displayed based on the saturation / lightness of each pixelated region.
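
The per-fragment logic can be sketched in JavaScript as follows. This mirrors the maths a fragment shader would do rather than reproducing the actual GLSL, and it assumes the 16 characters are laid out in a single row of the character texture, ordered from darkest to brightest. The names and the layout are assumptions for illustration:

```javascript
const LEVELS = 16; // number of characters, one per intensity level

// Map an intensity in [0, 1] to a character index in [0, LEVELS - 1].
function characterIndex(intensity) {
  const clamped = Math.min(Math.max(intensity, 0), 1);
  return Math.min(Math.floor(clamped * LEVELS), LEVELS - 1);
}

// Texture coordinates of a point inside the chosen character.
// cellU and cellV are the fragment's position within its cell, in [0, 1].
function atlasUv(index, cellU, cellV) {
  return [(index + cellU) / LEVELS, cellV];
}

// Multiply the sampled glyph value (0 = background, 1 = ink) by the
// averaged video colour so the character takes on the colour of its cell.
function shade(glyphSample, colour) {
  return colour.map((channel) => channel * glyphSample);
}
```

For example, an intensity of 0.5 selects character 8, and a fragment in the middle of that cell samples the character texture at u = 8.5 / 16.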

The result is the banner which you can see at the top of this page!